The AI boom has created no shortage of flashy products, but the teams that tend to last are the ones solving the painful infrastructure problems beneath the surface. That is where Sri Raghu Malireddi and Moss stand out.

At a glance, Moss sounds like another company in the crowded AI tooling space. Look a little closer, though, and the story becomes more interesting. Moss is focused on real-time semantic search for conversational AI, a part of the stack that matters far more than most end users realize. When an AI agent, voice assistant, or copilot feels fast, relevant, and natural, it is usually because the retrieval layer is doing its job well. When it feels slow or awkward, retrieval is often where things start breaking down.

That is the gap Sri Raghu Malireddi appears to be going after with Moss. Instead of building a broad, vague AI platform, he and the team have built around a specific bottleneck in modern AI infrastructure: how to retrieve useful context in real time without making users wait. In a market full of oversized promises, that kind of focus is often what turns an early startup into one worth watching.

Why AI agents need real-time search more than ever

The promise of AI agents is simple enough. They should understand context, find the right information quickly, and respond in a way that feels helpful rather than mechanical. In practice, that only works when the system can retrieve the right data almost instantly.

That is where many products struggle. A lot of traditional vector search and retrieval pipelines were not built for a world where people expect fluid back-and-forth conversations with software. They were acceptable when a few extra moments of waiting felt normal. They feel far less acceptable when someone is using voice AI, a chat interface, or a copilot that is supposed to behave like a responsive assistant.

Even a short delay can change the entire user experience. A pause in a voice interaction can make the product feel uncertain. A lag inside a copilot can make it feel unreliable. A slow answer from a context-aware AI system can break the sense that the software is actually paying attention.

That is why low-latency retrieval, semantic memory, and real-time access to context have become central to these products. Speed here is not just a technical optimization anymore. It is part of the product itself.

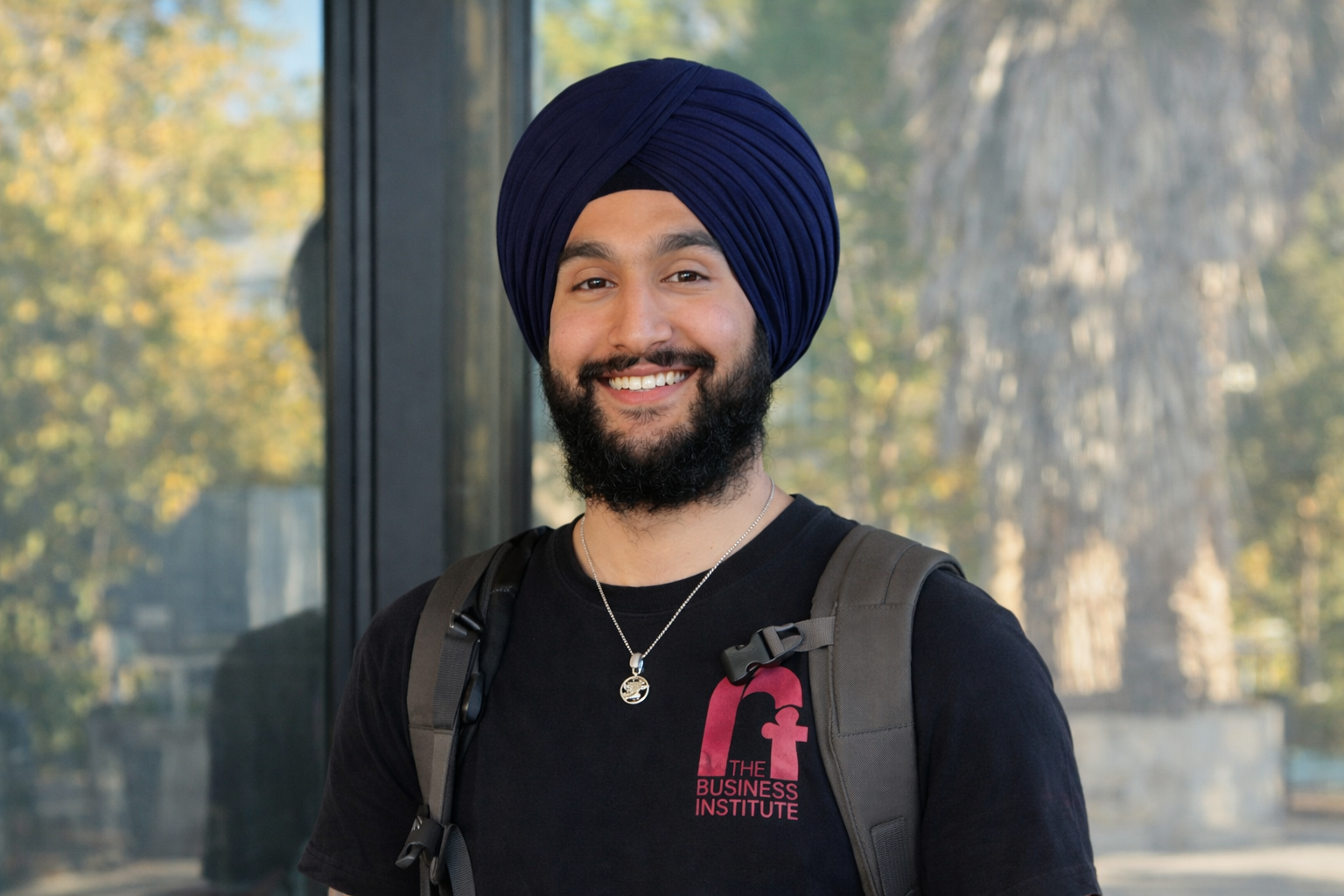

Sri Raghu Malireddi’s background gave Moss a strong starting point

Founder stories tend to be overpolished online, but in this case the background behind Moss actually fits the problem the company is trying to solve.

Public profiles of Sri Raghu Malireddi connect him with machine learning, LLMs, personalization systems, and large-scale product environments at companies such as Grammarly and Microsoft. That matters because Moss is not trying to solve a toy problem. Building real-time retrieval for modern AI products requires someone who understands both the research side of AI and the reality of shipping systems that people use every day.

That mix of experience shows up in how Moss is positioned. The product does not read like it came from a team chasing hype. It reads like it came from people who have seen what happens when a smart model meets slow infrastructure.

There is also a practical quality to the way Moss has been introduced to the market. Instead of focusing only on abstract AI language, the company talks in terms developers actually care about: sub-10 ms retrieval, offline indexing, local querying, deployment across the browser, edge, device, and cloud, and easy-to-use Python and TypeScript SDKs. Those are not random keywords. They reflect a founder mindset shaped by real engineering tradeoffs.

What Moss actually does and why that matters

Moss is best understood as a real-time semantic search layer built for conversational AI, multimodal AI, voice agents, and copilots. The company’s core value proposition is straightforward: help AI systems retrieve useful context quickly enough that the interaction still feels smooth and natural.

That matters because retrieval sits on the critical path of many modern AI products. Before an assistant can answer a question well, it usually needs access to relevant documents, chat history, internal knowledge, structured data, or live context. If that lookup takes too long, the quality of the experience drops even if the underlying model is strong.

Moss is positioning itself around the idea that search should happen close to where the agent runs rather than bouncing across multiple network layers and external systems. That is a meaningful shift. In simpler terms, the company is trying to remove the awkward delay between a user’s question and the system’s ability to find what it needs.

This is part of why the Moss story feels timely. AI buyers and builders are no longer impressed by intelligence alone. They care about responsiveness, retention, conversion, and whether the product feels ready for real-world use. Speed is not a side benefit anymore. For many AI-native platforms, it is the difference between a demo and a product people stick with.

The Moss vision fits the next wave of voice AI and copilots

One of the strongest parts of the Moss story is how clearly it aligns with where the market is moving.

The next wave of AI products is not just about chatbots sitting in a browser tab. It is about voice agents, AI assistants, copilots, and applications that need to surface the right information without interrupting the flow of work. A product that feels natural in those moments needs more than a good language model. It needs fast knowledge search, reliable agent memory, and access to relevant context at the exact moment it is needed.

That is why Moss’s emphasis on real-time conversation, instant recall, and search runtime matters. The company is not merely trying to be another database layer. It is trying to become part of the runtime that helps conversational systems feel immediate.

This is especially important in voice AI. People are far less forgiving of delay in spoken interactions than they are in text. In a written chat, a brief pause may be acceptable. In a voice setting, even a short wait can make the system feel clumsy. That is one reason a startup like Moss can attract attention quickly. It is working on a pain point users can feel right away, even if they never use terms like "vector index" or "embedding inference" themselves.

What makes Moss different from older search setups

A lot of AI teams have already spent time with traditional vector databases, custom retrieval pipelines, and cloud-heavy search architectures. Those systems can work, but they often come with tradeoffs that become more obvious as products try to operate in real time.

Moss is presenting a different approach. Instead of asking teams to manage more infrastructure, it leans into a zero-infrastructure message, compact index distribution, and retrieval that can run in the browser, on the edge, on a device, or in the cloud. That flexibility is important because not every AI product runs in the same environment.

For developers, this changes the conversation. It is no longer just about storing embeddings and running nearest-neighbor search somewhere in the background. It becomes about building a retrieval system that feels native to the application itself.
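To make "storing embeddings and running nearest-neighbor search" concrete, here is a minimal sketch of exact (brute-force) cosine-similarity search over an index held in the application's own memory, the kind of local lookup that involves no network hop. It is not Moss's SDK or algorithm: the `LocalIndex` class, its `add` and `search` methods, and the toy three-dimensional vectors are hypothetical illustrations. A real system would get embeddings from a model and would typically use an approximate index once collections grow large.

```python
import heapq
import math
from dataclasses import dataclass, field


@dataclass
class LocalIndex:
    """Hypothetical in-process vector index (illustrative only, not the Moss API)."""

    ids: list[str] = field(default_factory=list)
    vectors: list[list[float]] = field(default_factory=list)

    @staticmethod
    def _normalize(vec: list[float]) -> list[float]:
        # Unit-length vectors let a plain dot product serve as cosine similarity.
        norm = math.sqrt(sum(x * x for x in vec))
        if norm == 0.0:
            raise ValueError("cannot normalize a zero vector")
        return [x / norm for x in vec]

    def add(self, doc_id: str, vector: list[float]) -> None:
        # Store a normalized copy so each query only needs dot products.
        self.ids.append(doc_id)
        self.vectors.append(self._normalize(vector))

    def search(self, query: list[float], k: int = 3) -> list[tuple[str, float]]:
        # Exact nearest-neighbor search: score every stored vector against the
        # query, then keep the k highest cosine similarities. Everything runs
        # in local memory; no network request is made.
        q = self._normalize(query)
        scored = (
            (doc_id, sum(a * b for a, b in zip(q, vec)))
            for doc_id, vec in zip(self.ids, self.vectors)
        )
        return heapq.nlargest(k, scored, key=lambda pair: pair[1])


if __name__ == "__main__":
    # Toy 3-dimensional "embeddings"; real embeddings come from a model.
    index = LocalIndex()
    index.add("refund-policy", [0.9, 0.1, 0.0])
    index.add("shipping-times", [0.1, 0.9, 0.1])
    index.add("store-hours", [0.0, 0.2, 0.9])

    for doc_id, score in index.search([0.8, 0.2, 0.1], k=2):
        print(f"{doc_id}: {score:.3f}")
```

With these toy vectors, the query lands closest to `refund-policy`, followed by `shipping-times`. Brute-force scoring is linear in the number of stored vectors, which is fine for small, local collections; larger indexes usually trade a little accuracy for speed with approximate methods.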

That positioning also helps explain why Moss talks so much about Rust, WebAssembly, on-device performance, and local lookups. These are not just technical details for show. They support the larger point that retrieval should feel fast, lightweight, and close to the user interaction rather than hidden behind a chain of slow network hops.

In a crowded market, clarity like that matters. Moss is not trying to be everything. It is trying to be very good at one painful, high-value problem.

Y Combinator gave Moss another layer of momentum

Another reason Sri Raghu Malireddi and Moss are getting more attention is the Y Combinator connection.

Moss is part of Y Combinator's Fall 2025 batch (F25), and that matters for more than branding. Y Combinator still acts as a strong market signal for early-stage startups, especially in categories where technical credibility and speed of execution matter. Being in YC does not guarantee long-term success, of course, but it does tell the market that the startup has passed a certain threshold of clarity, ambition, and founder quality.

For Moss, the timing also works in its favor. The AI market is moving fast, but it is also becoming more demanding. Investors, developers, and product teams are paying closer attention to whether a startup solves a real infrastructure problem or simply rides the broader AI wave. Moss benefits because its value proposition is specific and easy to understand.

That has likely helped with developer adoption, early visibility, and trust. Public messaging around the company points to early traction, including production pilots, package installs, and use by teams building the future of conversational AI. In a space full of noise, traction tied to clear developer value usually carries more weight than broad branding claims.

Why speed is a real business advantage for Moss

It is easy to describe speed as a technical feature, but for products like Moss it is better understood as a business advantage.

When AI agents retrieve context faster, the user experience improves. When the experience improves, people are more likely to stay engaged. When engagement improves, products have a better shot at stronger user retention, better conversion, and deeper integration into daily workflows.

That is part of what makes the Moss pitch compelling. The company is not saying speed matters just because engineers enjoy performance gains. It is saying speed matters because it changes how AI products feel and how well they work in the real world.

This is also where Sri Raghu Malireddi’s founder story connects cleanly to the product. A founder with experience shipping real systems at scale is more likely to understand that performance is not separate from product quality. In AI especially, people do not judge the model in isolation. They judge the whole experience, including latency, context retrieval, and whether the assistant feels genuinely useful.

If Moss can keep delivering on that promise, its technical positioning could translate into something more durable than early hype.

Moss is rising because it solves a problem developers actually feel

Many startup stories sound impressive on paper but feel abstract in practice. Moss does not have that problem. The issue it is addressing is concrete. Developers building voice agents, copilots, multimodal apps, and AI assistants know exactly what it feels like when retrieval becomes the bottleneck.

That is a big part of why the company has room to grow. It sits at the intersection of several trends that are likely to keep expanding: semantic search, AI infrastructure, edge AI, on-device AI, and developer tools for production-ready AI products.

The company also benefits from a clean story. Sri Raghu Malireddi is not being presented as someone building yet another generic AI startup. He is building Moss around a very specific need inside the AI ecosystem. That makes the narrative stronger, but more importantly, it makes the product easier to understand.

For startup founders, that is a useful reminder. The market does not always reward the broadest vision first. Very often, it rewards the clearest one.

What Sri Raghu Malireddi’s Moss journey says about modern AI startup success

There is a reason the Moss story feels more substantial than many early AI startup narratives. It reflects a pattern that keeps showing up in the strongest companies in this category.

First, solve a problem that matters to real users, even if those users happen to be developers. Second, make the value obvious. Third, build around a shift that is already underway rather than one that might appear years from now.

Sri Raghu Malireddi and Moss fit that pattern well. The company is tied to clear market demand around real-time retrieval, AI agent performance, voice AI, and copilot infrastructure. Its product language is practical. Its technical choices support the product story. Its YC backing adds visibility. And its category, real-time search for AI agents, is only becoming more relevant as more software starts acting like an assistant rather than a static application.

That is what makes Moss feel like a startup with real upward momentum. It is not trying to win attention by being louder than everyone else. It is building around a real limitation inside modern AI products and turning that limitation into an opportunity.

For now, that may be the clearest sign of why Sri Raghu Malireddi is gaining attention. He is not just building in AI. He is building in one of the parts of AI that determines whether the experience feels fast, useful, and ready for everyday use.

And in this market, that is a strong place to be.